Locate large files or directories on Linux with bash

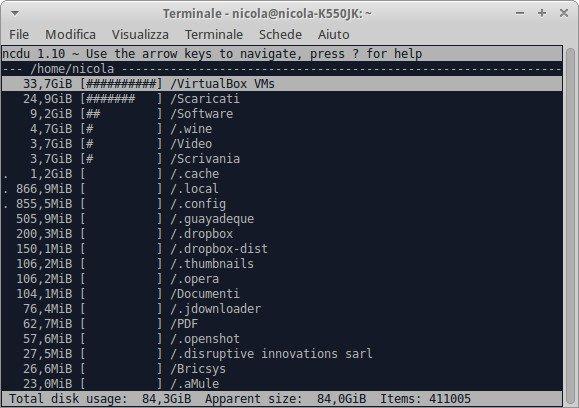

From a discussion on Super User, I discovered an awesome command that helps you locate the largest files or directories on your computer by typing a single line in a bash terminal. I only added some code that writes the result to a text file without the annoying "Permission denied" messages. The article also includes a list of the best-known disk usage analyzers for Linux.

Some time ago, I couldn't understand why the free space on my partition had shrunk so quickly. I knew there was software for analysing disk usage, with or without a GUI, but I wanted something new and more immediate. Nevertheless, the first solutions I found on the Internet were lists of disk analyzers.

List of analyzers

On the NCurses Disk Usage home page, I found this list of disk usage analyzers:

- NCurses Disk Usage - Ncdu is a disk usage analyzer with an ncurses interface. It is designed to find space hogs on a remote server where you don't have an entire graphical setup available, but it is a useful tool even on regular desktop systems. Ncdu aims to be fast, simple and easy to use, and should be able to run in any minimal POSIX-like environment with ncurses installed.

- gt5 - Quite similar to ncdu, but a different approach.

- tdu - Another small ncurses-based disk usage visualization utility.

- TreeSize - GTK, using a treeview.

- Baobab - GTK, using pie-charts, a treeview and a treemap. Comes with GNOME.

- GdMap - GTK, with a treemap display.

- KDirStat - KDE, with a treemap display.

- QDiskUsage - Qt, using pie-charts.

- xdiskusage - FLTK, with a treemap display.

- fsv - 3D visualization.

- Philesight - Web-based clone of Filelight.

Many of them haven't been updated in a long while, but they let you browse your filesystem and see at a glance where the disk space is being used.

My needs

The analyzers listed above are good, but I just wanted a utility that finds the largest files (or directories) together with their relative paths, so that I could delete them easily: a list of the top 10 (or however many I need) items taking up the most space on my hard disk!

The solution

A discussion on Super User gave me a simple command line that fits my needs perfectly: Linux utility for finding the largest files/directories [closed]. After trying it, I noticed a lot of annoying messages caused by missing permissions, and the terminal screen became very crowded. To avoid this, I redirect the list of files or directories to a text file that I can look at later.

Here are the commands:

- To find the largest 10 files:

find . -type f -print0 2>/dev/null | xargs -0 du 2>/dev/null | sort -n | tail -10 | cut -f2 | xargs -I{} du -sh {} 2>/dev/null > largest_files.txt; more largest_files.txt

- To find the largest 10 directories:

find . -type d -print0 2>/dev/null | xargs -0 du 2>/dev/null | sort -n | tail -10 | cut -f2 | xargs -I{} du -sh {} 2>/dev/null > largest_directories.txt; more largest_directories.txt

The only difference is -type f versus -type d, where f stands for files and d for directories. sort -n sorts by size in ascending order, so tail -10 keeps the last 10 lines, that is, the 10 largest items. The "Permission denied" messages are written to standard error, not to the pipe, so 2>/dev/null discards them; a grep placed in the pipeline would never see them. Finally, you can replace the "." with any path; with ".", the search starts from the current working directory and descends recursively into its subfolders.
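If you run the search often, the same idea can be wrapped in a small shell function. The following is only a sketch: the name largest_files and its two arguments (starting path and number of items) are my own assumptions, not something from the Super User discussion. It sends error messages such as "Permission denied" to /dev/null and reads the paths line by line, so file names containing spaces survive (names containing newlines would still break it).

```shell
#!/usr/bin/env bash
# largest_files: hypothetical helper, not part of the original one-liner.
# Usage: largest_files [path] [count]
# Prints the [count] largest regular files under [path], largest last.
largest_files() {
    local dir="${1:-.}" count="${2:-10}"
    # du -k prints "<size in KiB><TAB><path>" for every file found;
    # errors from find and du go to stderr, which is discarded here.
    find "$dir" -type f -exec du -k {} + 2>/dev/null |
        sort -n |              # ascending by size
        tail -n "$count" |     # keep the largest entries
        cut -f2- |             # drop the numeric size, keep the path
        while IFS= read -r path; do
            # Print a human-readable size for each remaining path.
            du -sh -- "$path" 2>/dev/null
        done
}
```

For example, largest_files ~ 10 > largest_files.txt would write the top 10 files of your home directory to a text file, as in the commands above.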

Here is an example of my top 10 files, run from my home directory. The list is in ascending order, so the winner is the last line:

239M ./Software/pitivi-0 94-beta-x86/pitivi-0.94-x86_64
243M ./Software/pitivi-latest-x86/pitivi-latest-x86_64.tar
250M ./Video/screenoutput.mkv
278M ./Scaricati/Backup_Prisca/advert_environment.svg
307M ./Scaricati/Verificare/IperSpaceMaxPE-6.0.1.run
313M ./Scrivania/Temp/Articoli/Latex/Scanner/scanner_join2.ps
327M ./Scrivania/Temp/Review/Capitoli/C1/Anaconda3-2.2.0-Linux-x86_64.sh
706M ./Video/Analytics How Big Data Can Solve our Most Complex Problems.mp4
1,5G ./Scaricati/cuda-repo-ubuntu1404-7-0-local 7 0-28 amd64/cuda-repo-ubuntu1404-7-0-local_7.0-28_amd64.deb
34G ./VirtualBox VMs/Windows7Starter/Windows7Starter.vdi

Comments

A shorter version:

du -m -d 1 2> /dev/null | sort -gr
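In this one-liner, -m reports sizes in megabytes, -d 1 limits the report to the immediate subdirectories, 2> /dev/null hides the error messages, and sort -gr puts the largest entries first. A possible way to reuse it with a path and a limit is sketched below; the function name top_dirs and its arguments are assumptions made for illustration, and sort -nr is used instead of -gr because the sizes are plain integers.

```shell
#!/usr/bin/env bash
# top_dirs: hypothetical wrapper around the one-liner above.
# Usage: top_dirs [path] [count]
# Prints the [count] largest entries among [path] and its immediate
# subdirectories, sizes in megabytes, largest first.
top_dirs() {
    local dir="${1:-.}" count="${2:-10}"
    # The first line of the result is normally the total for [path] itself.
    du -m -d 1 "$dir" 2>/dev/null | sort -nr | head -n "$count"
}
```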
